Evaluating generative video models remains an open problem. Reference-based metrics such as the Structural Similarity Index Measure (SSIM) and Peak Signal-to-Noise Ratio (PSNR) reward pixel fidelity over semantic correctness, while Fréchet Video Distance (FVD) favors distributional textures over physical plausibility. Binary Visual Question Answering (VQA)-based benchmarks such as VBench 2.0 are prone to yes-bias and rely on low-resolution auditors that miss temporal failures. Moreover, their prompts target a single dimension at a time, multiplying the number of videos required without guaranteeing reliable results.

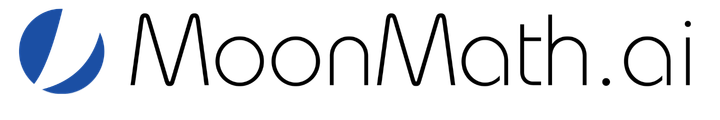

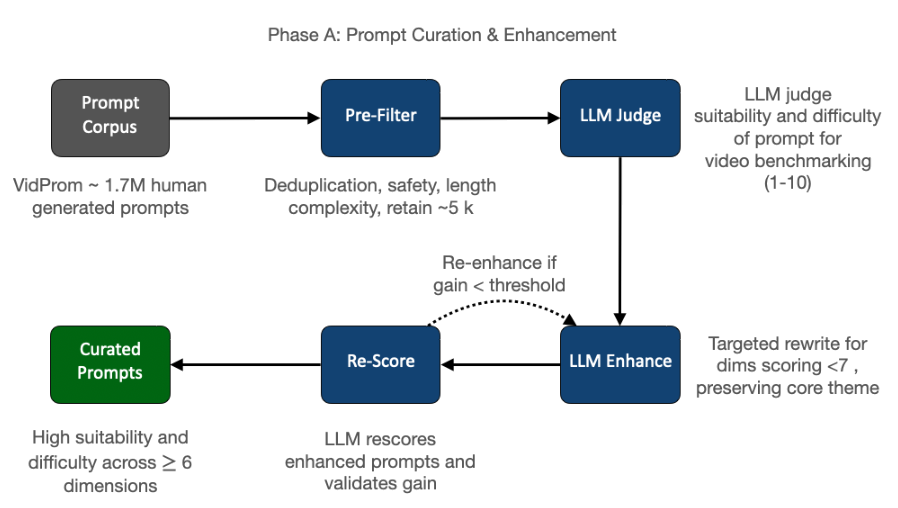

WorldJen addresses these limitations directly. Binary VQA is replaced with Likert-scale questionnaires graded by a vision-language model (VLM) that receives frames at native video resolution. Video generation costs are reduced by using adversarially curated prompts, each designed to exercise up to 16 quality dimensions simultaneously. The framework is built around two interlocking contributions.

First, a blind human preference study collects 2,696 pairwise annotations from 7 annotators, with 100% pair coverage over 50 of the curated prompts × 6 state-of-the-art video models. The study achieves a mean inter-annotator agreement of 66.9% and establishes a human ground-truth Bradley–Terry (BT) rating with a three-tier structure.
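As an illustration of how a BT rating can be derived from pairwise preferences, the following is a minimal sketch using the classic minorization-maximization (Zermelo) update. The fitting procedure actually used in the study is not specified here, so the function name `fit_bradley_terry`, the toy `wins` matrix, and the three placeholder models are all assumptions made for illustration only.

```python
def fit_bradley_terry(wins, n_iter=500, tol=1e-12):
    """Fit Bradley-Terry strengths with the MM (Zermelo) update.

    wins[i][j] is the number of times item i was preferred over item j
    (the diagonal is assumed to be zero). Returns strengths that sum to 1.
    Assumes every item wins at least once, so no strength collapses to 0.
    """
    n = len(wins)
    p = [1.0 / n] * n
    for _ in range(n_iter):
        new_p = []
        for i in range(n):
            total_wins = sum(wins[i])
            # Comparisons between i and j, weighted by current strengths.
            denom = sum(
                (wins[i][j] + wins[j][i]) / (p[i] + p[j])
                for j in range(n)
                if j != i
            )
            new_p.append(total_wins / denom if denom > 0 else p[i])
        norm = sum(new_p)
        new_p = [x / norm for x in new_p]
        converged = max(abs(a - b) for a, b in zip(new_p, p)) < tol
        p = new_p
        if converged:
            break
    return p


# Hypothetical toy data: 3 placeholder models, 10 comparisons per pair.
wins = [
    [0, 7, 9],  # model 0 preferred over model 1 seven times, over model 2 nine times
    [3, 0, 6],
    [1, 4, 0],
]
strengths = fit_bradley_terry(wins)
ranking = sorted(range(len(strengths)), key=lambda i: -strengths[i])
```

Under the BT model, the probability that item i is preferred over item j is `strengths[i] / (strengths[i] + strengths[j])`, so sorting by strength yields a ranking that can then be grouped into tiers.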

Second, a VLM-as-judge evaluation engine scores the videos using prompt-specific, dimension-specific Likert questionnaires (10 questions per dimension, 47,160 scored responses) and independently reproduces the human-established three-tier BT rating structure. The VLM ranking achieves a Spearman ρ̂ = 1.000 (p = 0.0014), which is interpreted as tier agreement with the human results.
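For intuition about the reported statistic, the sketch below computes Spearman's rank correlation for two identical rankings of 6 items together with an exact one-sided permutation p-value. The test used to obtain the reported p-value is not stated here; an exact one-sided permutation test is an assumption that happens to be consistent with it, since only 1 of the 6! = 720 orderings reaches ρ̂ = 1. The rank lists are placeholders, not the study's data.

```python
from itertools import permutations


def spearman_rho(x, y):
    """Spearman's rho for two rankings of the same items, assuming no ties."""
    n = len(x)
    d2 = sum((a - b) ** 2 for a, b in zip(x, y))
    return 1 - 6 * d2 / (n * (n ** 2 - 1))


def exact_one_sided_p(x, y):
    """Fraction of all orderings of y whose rho is at least the observed rho."""
    observed = spearman_rho(x, y)
    count = 0
    total = 0
    for perm in permutations(y):
        total += 1
        if spearman_rho(x, perm) >= observed - 1e-12:
            count += 1
    return count / total


# Placeholder ranks for 6 models; identical orderings give perfect agreement.
human_ranks = [1, 2, 3, 4, 5, 6]
vlm_ranks = [1, 2, 3, 4, 5, 6]

rho = spearman_rho(human_ranks, vlm_ranks)
p_value = exact_one_sided_p(human_ranks, vlm_ranks)  # 1 / 720
```

With only 6 items, 1/720 is the smallest p-value such a test can produce, which is one reason a perfect rank correlation at this sample size is more safely read as agreement on ordering and tiers than as a precise effect size.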

Three focused ablation studies validate the robustness of the VLM evaluation framework.